Two ways to skill up your Claude skills

Data is often far from perfect: missing data points, edge cases, wrong formats, and values that are incorrect because of faulty transformations or entry errors. Fixing these problems is hard, especially in enterprise-scale companies, where data passes through chains of databases and large code blocks. To find a data quality issue you have to understand the code, which was sometimes written years ago. Dealing with large code blocks and repositories isn’t a piece of cake. How do you understand the code from a business perspective? How can you easily visualise what’s happening in the data transformation? Where do you have to make a change to solve the data quality issue? A data lineage tool can often help by quickly showing where the data comes from. Finding the source is often the key.

In this article I would like to dive into the use of Claude skills to analyse large code blocks and determine their data lineage.

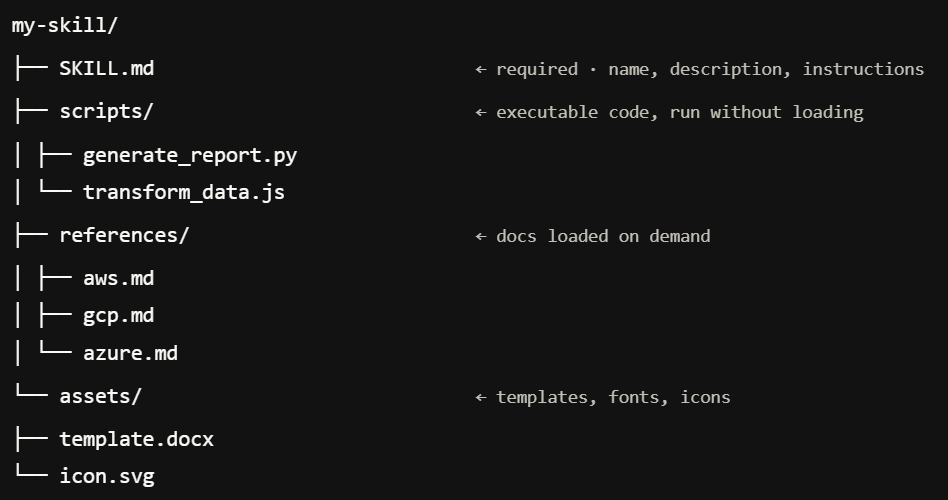

What are Claude skills?

Claude skills are reusable prompts in a specific format, created to standardize prompting steps. You specify a name and a description so that Claude chat or a Claude agent understands when to use the skill. Adding scripts or reference files extends what the skill can do and defines the specific context it works in.
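To make the format concrete, here is a minimal Python sketch that writes a skill folder to disk. It assumes the layout described in the Claude documentation: a folder containing a `SKILL.md` file that starts with YAML frontmatter holding `name` and `description`, followed by the instructions in Markdown. The helper names (`render_skill_md`, `write_skill`) and the example skill are hypothetical and only serve as illustration.

```python
from pathlib import Path


def render_skill_md(name: str, description: str, instructions: str) -> str:
    """Return SKILL.md text: YAML frontmatter followed by Markdown instructions.

    Assumption: name and description are plain one-line strings without
    characters that need YAML quoting (such as a colon).
    """
    frontmatter = f"---\nname: {name}\ndescription: {description}\n---\n"
    return f"{frontmatter}\n{instructions.strip()}\n"


def write_skill(root: Path, name: str, description: str, instructions: str) -> Path:
    """Create <root>/<name>/SKILL.md and return the path of the file."""
    skill_dir = root / name
    skill_dir.mkdir(parents=True, exist_ok=True)
    skill_file = skill_dir / "SKILL.md"
    skill_file.write_text(
        render_skill_md(name, description, instructions), encoding="utf-8"
    )
    return skill_file
```

Calling `write_skill(Path("skills"), "code-analyser", "Analyses a code block and lists its data transformations.", "# Steps\n1. Read the code.")` would create `skills/code-analyser/SKILL.md`. The description is what tells Claude when to pick the skill, so it is worth keeping it specific.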

The difference between a skill and an agent is that an agent has a more generic context and reasons more broadly. The difference between MCP and a skill is that MCP provides the connection to tools, whereas skills explain how to use those tools effectively. Skills are reusable and can be used by multiple agents. They are the building blocks of your agentic workflow. You can find more information about skills in the Claude documentation:

- https://docs.claude.com/en/docs/agents-and-tools/agent-skills/overview.md
- https://docs.claude.com/en/docs/agents-and-tools/agent-skills/best-practices.md
- https://docs.claude.com/en/docs/claude-code/sub-agents.md

Claude skills in sequence

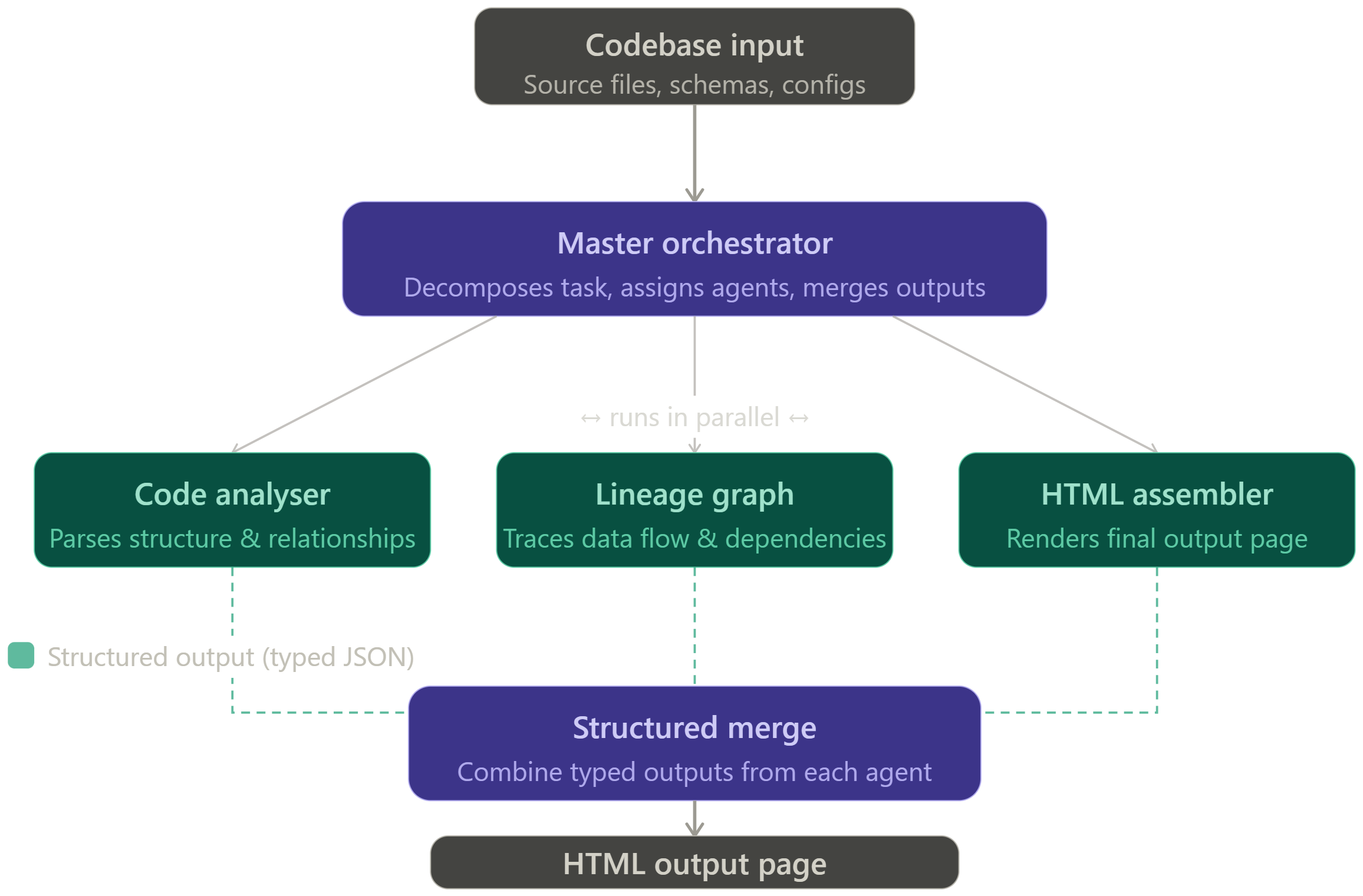

For me, the main use of skills was to chain several of them in sequence. When the first skill finished, the second would pick up the output created by the first. This gave me a double benefit: the prompts are standardized and I only have to initiate the sequence. Before, I needed multiple iterations and prompts to determine the data lineage of a code block. Now I just drop in the code and the sequence first analyses it, then creates a data lineage graph, and lastly shows the lineage as an HTML page.
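The shape of such a sequence can be sketched in plain Python. This is not how Claude runs skills internally. Each function below is a hypothetical stand-in for one skill, and the "analysis" is a toy parser that only understands lines of the form `target = source`. The point is the hand-off: every step receives the state produced by the previous one and adds its own output.

```python
import html
from typing import Callable

# A "skill" here is any function that takes the shared state and returns a new one.
Skill = Callable[[dict], dict]


def analyse_code(state: dict) -> dict:
    """Stand-in for the code analysis skill: collect 'target = source' assignments."""
    edges = []
    for line in state["code"].splitlines():
        if "=" in line:
            target, source = (part.strip() for part in line.split("=", 1))
            edges.append({"source": source, "target": target})
    return {**state, "edges": edges}


def build_lineage_graph(state: dict) -> dict:
    """Stand-in for the lineage skill: map every target to its direct sources."""
    graph: dict[str, list[str]] = {}
    for edge in state["edges"]:
        graph.setdefault(edge["target"], []).append(edge["source"])
    return {**state, "graph": graph}


def render_html(state: dict) -> dict:
    """Stand-in for the presentation skill: render the graph as an HTML list."""
    items = "".join(
        f"<li>{html.escape(target)} &larr; {html.escape(', '.join(sources))}</li>"
        for target, sources in state["graph"].items()
    )
    return {**state, "html": f"<ul>{items}</ul>"}


def run_sequence(code: str, skills: list[Skill]) -> dict:
    """Run the skills in order, passing each one the output of the previous one."""
    state: dict = {"code": code}
    for skill in skills:
        state = skill(state)
    return state


result = run_sequence(
    "revenue = price\nmargin = revenue",
    [analyse_code, build_lineage_graph, render_html],
)
```

Because every step only depends on the state it receives, steps can be reordered, replaced or tested on their own, which is the same property that makes standardized skills attractive.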

However, when I reached a sequence of around 15 skills, it took too long and the results varied too much. To solve this I created agents to orchestrate the thought process and kick off multiple skills: a code analyser agent, an agent that creates a data lineage graph, and an agent that puts it all together into an HTML page. Using agents in combination with skills helped to keep the output structured while maintaining performance and a thorough thought process.

An agent and skill combination

While working on this bigger sequence, I found that it’s useful to create a very structured output. The Claude documentation mentions using either Markdown or JSON. There are two ways to pass the output from skill to skill: (1) through the agent context, where the agent carries the output from skill to skill in its own reasoning context, or (2) through a static file, which standardizes the output even more. For the second option, add a task to each skill to save its output in a shared file. The next skill or agent reads the file as input, makes its additional changes, and stores its output back into the file.
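The shared-file option can be illustrated with a small Python sketch. The file name, the JSON layout (one top-level key per skill) and the helper names are assumptions made for this example. In practice the skill instructions would tell Claude to read and update the file, possibly through a bundled script like this one.

```python
import json
from pathlib import Path


def read_shared(path: Path) -> dict:
    """Load the shared file, or start with an empty document if it does not exist yet."""
    if not path.exists():
        return {}
    return json.loads(path.read_text(encoding="utf-8"))


def save_skill_output(path: Path, skill_name: str, output: dict) -> dict:
    """Store one skill's output under its own key and write the file back.

    Earlier outputs are kept, so every later skill can read the full history.
    """
    shared = read_shared(path)
    shared[skill_name] = output
    path.write_text(json.dumps(shared, indent=2), encoding="utf-8")
    return shared
```

A first skill could call `save_skill_output(Path("lineage.json"), "code-analyser", {...})`, and the next one would start with `read_shared(Path("lineage.json"))`. Keeping one key per skill avoids one step silently overwriting the output of another.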

Lastly, you can add a status report and pass it along from skill to skill. An example could be a timestamp, a sequence number and a brief description of the skill’s actions. The next skill can use this status object to understand what happened before, and you can use it to debug the steps during a run. This is extremely helpful when optimizing your agentic workflow.
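A status object of this kind could look as follows. The field names and the `append_status` helper are hypothetical. The sketch only shows the three ingredients mentioned above (timestamp, sequence number, short description) plus the name of the skill that wrote the entry.

```python
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class StatusEntry:
    sequence: int      # position of the step in the run, starting at 1
    skill: str         # name of the skill that produced this entry
    timestamp: str     # UTC time in ISO 8601 format
    description: str   # brief summary of what the skill did


def append_status(log: list[dict], skill: str, description: str) -> list[dict]:
    """Return a new log with one entry added; the input log is left untouched."""
    entry = StatusEntry(
        sequence=len(log) + 1,
        skill=skill,
        timestamp=datetime.now(timezone.utc).isoformat(),
        description=description,
    )
    return [*log, asdict(entry)]


log = append_status([], "code-analyser", "Parsed 2 transformations.")
log = append_status(log, "lineage-graph", "Built a graph with 3 nodes.")
```

Stored as JSON next to the skill outputs, such a log gives the next skill a compact view of what already happened and gives you a trail to follow when a run goes wrong.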

Using Claude to optimize code

The next step is to use the data lineage to find data quality issues. Because Claude already knows the data lineage, it is well placed to provide recommendations. Breaking the code down into a data lineage structure is necessary for a good recommendation: LLMs need a defined context to provide correct and accurate recommendations.
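Why a lineage structure narrows the context can be shown with a short sketch. It assumes the lineage is stored as a dictionary that maps each field to its direct sources. Both this representation and the `upstream_sources` helper are illustrative choices, not a fixed format. Given a field with a data quality issue, the function walks upstream and returns the original sources that feed it, which is the part of the code worth handing to the LLM.

```python
def upstream_sources(graph: dict[str, list[str]], field: str) -> list[str]:
    """Return the root sources (fields without parents) that feed into `field`."""
    seen: set[str] = set()
    roots: list[str] = []
    stack = [field]
    while stack:
        node = stack.pop()
        if node in seen:
            continue  # already visited, also protects against cycles
        seen.add(node)
        parents = graph.get(node, [])
        if not parents and node != field:
            roots.append(node)
        stack.extend(parents)
    return sorted(roots)


lineage = {
    "margin": ["revenue", "cost"],
    "revenue": ["price", "quantity"],
}
suspects = upstream_sources(lineage, "margin")
```

With this example graph, an issue in `margin` traces back to `cost`, `price` and `quantity`, so the recommendation can focus on the transformations along those paths instead of the whole repository.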

A future in which LLMs automatically detect and resolve data issues is close. An LLM check on your data and code should become a standard control when running a data pipeline. Let the LLM analyse the input and output and find the gaps. A structured data lineage skill could be just the first part of this control.